AI in Custody Decisions: What Judges Consider

AI is becoming a tool to assist U.S. family courts in custody decisions, focusing on the "best interests of the child." These systems analyze data like work schedules, school calendars, and parental involvement to provide evidence-backed recommendations. Judges retain full decision-making authority, using AI as a support tool.

Key takeaways:

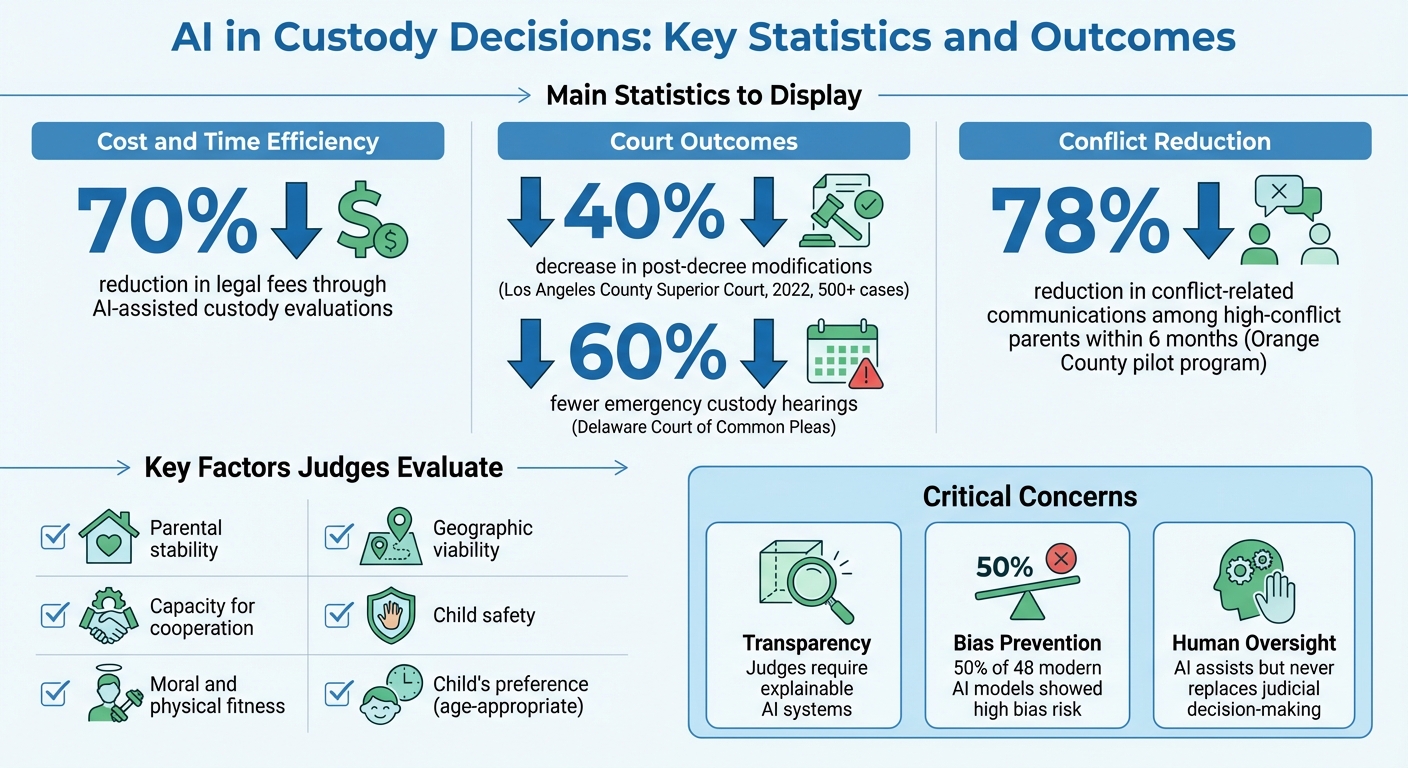

- AI tools reduce time and costs in custody evaluations, cutting legal fees by up to 70%.

- Courts using AI have seen a 40% decrease in post-decree modifications and fewer emergency hearings.

- Transparency and bias remain critical concerns; judges demand explainable and unbiased AI systems.

- Tools like Coflo help streamline custody planning by offering clear, research-based insights tailored to families' needs.

While AI aids efficiency, courts emphasize human oversight to ensure fairness and accountability.

AI Impact on Custody Decisions: Key Statistics and Outcomes

The 'Best Interests of the Child' Legal Standard

When it comes to custody decisions in U.S. family courts, everything revolves around one guiding principle: the "best interests of the child." This standard ensures that a child's safety, stability, and emotional well-being are prioritized above all else. As The Schreck Law Group puts it:

"The 'best interest of the child' is the ultimate goal."

AI tools are designed to complement this principle, not replace it. They analyze the same factors judges traditionally consider - such as parental fitness, stability, cooperation, and developmental needs - offering objective insights to support judicial discretion. These tools don't make decisions but provide data-driven evidence to reinforce well-founded legal judgments.

What Judges Evaluate in Custody Cases

Judges weigh several factors when deciding custody arrangements, with parental stability often taking center stage. This includes evaluating whether parents maintain consistent routines, take responsibility for daily tasks like managing doctor visits or school activities, and meet the child's basic needs for food, shelter, and healthcare.

Another key factor is the capacity for cooperation. For instance, Florida law highlights "the child's relationship with each parent and the time each parent spends with them," emphasizing the importance of fostering a positive co-parenting dynamic.

Judges also assess moral and physical fitness, considering issues like mental health, substance abuse, and criminal history. Geographic viability - such as commute times and proximity to schools and extracurricular activities - is another critical element. Above all, safety is paramount; verified evidence of abuse or neglect can heavily influence custody arrangements. In some cases, a child's preference may be considered if they are deemed mature enough, but this generally carries less weight than objective factors like stability and safety.

How AI Supports Traditional Evidence

AI technology steps in to streamline custody evaluations by processing data that would otherwise take significant time and effort to review manually. For example, AI can analyze communication patterns through approved parenting apps, identifying which parent responds promptly to scheduling requests and flagging instances of hostility. These systems can also verify visitation compliance using location data and track attendance at schools, medical appointments, and extracurricular activities.

Financial records are another area where AI proves useful. By analyzing spending habits, these tools can distinguish between essential expenses, like healthcare and education, and patterns that might indicate poor financial judgment. AI also incorporates data from school attendance, academic performance, and healthcare utilization to recommend schedules tailored to the child's developmental needs, aligning with principles from developmental psychology.

Courts that have adopted these tools report measurable results. In 2022, the Superior Court of California in Los Angeles County used an AI-driven parenting plan program for over 500 cases, leading to a 40% drop in post-decree modifications. Similarly, Delaware's Court of Common Pleas saw 60% fewer emergency custody hearings after adopting AI scheduling tools that factored in local traffic and school calendars.

While AI doesn't make the final call on custody, it provides judges with evidence-based insights that complement traditional forms of evidence like witness testimony and psychological evaluations. These tools help uphold legal standards, but they also raise questions about AI transparency and bias.
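The communication analysis described earlier in this section can be illustrated with a small sketch. It assumes a hypothetical message log exported from a parenting app as `(request_time, reply_time, responder)` tuples; the function name and data layout are illustrative assumptions, not the format of any real app.

```python
from datetime import datetime
from statistics import median


def median_response_hours(messages):
    """Return each parent's median reply time, in hours, to scheduling requests.

    `messages` is a hypothetical log of (request_time, reply_time, responder) tuples.
    """
    hours_by_parent = {}
    for request_time, reply_time, responder in messages:
        hours = (reply_time - request_time).total_seconds() / 3600
        hours_by_parent.setdefault(responder, []).append(hours)
    return {parent: median(hours) for parent, hours in hours_by_parent.items()}


# Made-up sample data for illustration only.
sample_log = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 10, 0), "parent_a"),
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 12, 0), "parent_a"),
    (datetime(2024, 3, 2, 9, 0), datetime(2024, 3, 3, 9, 0), "parent_b"),
]
summary = median_response_hours(sample_log)
```

A summary like this is only one input among many. It says nothing about why a reply was slow, which is the kind of context a judge or evaluator still has to supply.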


Transparency and Accountability in AI Systems

When AI steps into the courtroom, judges demand a clear understanding of how these systems operate. Custody decisions, which touch on fundamental parental rights, are subject to intense scrutiny. As Hon. Brian MacKenzie (Ret.) explains:

"Judicial legitimacy depends not only on fair outcomes, but also on the public's confidence that a judge's decisions are reasoned, ethical, and explainable."

Without clear explanations, parents are left unable to challenge AI-generated recommendations effectively. Judges need to trace the decision-making process, understand which factors were most influential, and confirm that the recommendations align with legal standards. Without this transparency, courts risk relying on tools they cannot fully evaluate, setting a high bar for what they expect from AI systems.

What Judges Expect from AI Tools

Judges require AI systems to provide full disclosure of their data sources, variables, and the weighting factors used in recommendations. For instance, they need to know whether geographic proximity was given more importance than parental cooperation - and by how much. This granular detail allows attorneys to scrutinize AI-generated recommendations just as they would a human expert's testimony.

Explainable AI (XAI) systems are designed to meet these demands by producing detailed reports. These reports show exactly which variables - such as work schedules, school proximity, age-appropriate holiday custody plans, or historical attendance - shaped a recommendation. Additionally, courts expect these systems to include audit trails that document how conclusions were reached, ensuring transparency for judicial review and appeals. In some cases, attorneys are required to certify that AI-generated documents have been independently reviewed for accuracy and compliance with legal standards.
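To make the idea of disclosed weights and an audit trail concrete, here is a minimal sketch of an explainable score. The factor names, the weights, and the 0-to-1 scaling are all assumptions chosen for illustration; they do not describe how any court-approved tool actually works.

```python
from dataclasses import dataclass, field

# Hypothetical weights for illustration only; a real tool would disclose its own.
WEIGHTS = {
    "geographic_proximity": 0.40,
    "parental_cooperation": 0.35,
    "historical_attendance": 0.25,
}


@dataclass
class Explanation:
    score: float
    contributions: dict[str, float]
    audit_trail: list[dict] = field(default_factory=list)

    def ranked_factors(self) -> list[tuple[str, float]]:
        """Factors ordered from most to least influential."""
        return sorted(self.contributions.items(), key=lambda kv: kv[1], reverse=True)


def explain_score(factors: dict[str, float], weights: dict[str, float] = WEIGHTS) -> Explanation:
    """Score one candidate plan and record how each factor contributed."""
    contributions = {}
    trail = []
    for name, weight in weights.items():
        value = factors[name]  # assumed to be pre-scaled to the range 0..1
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        contributions[name] = weight * value
        # Each audit entry records the input, the weight, and the result.
        trail.append({"factor": name, "value": value, "weight": weight,
                      "contribution": contributions[name]})
    return Explanation(sum(contributions.values()), contributions, trail)


report = explain_score({
    "geographic_proximity": 0.9,
    "parental_cooperation": 0.6,
    "historical_attendance": 0.8,
})
for name, contribution in report.ranked_factors():
    print(f"{name}: {contribution:.3f}")
```

Because every contribution is just weight times value, an attorney can see exactly how much geographic proximity outweighed parental cooperation in a given recommendation, and can contest either the input or the weight.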

Why Judges Reject Opaque AI Systems

Given these strict requirements, opaque systems - often referred to as "black-box" algorithms - are frequently rejected by family courts. These systems conceal their internal logic, making it impossible for lawyers to challenge the reasoning behind the recommendations. This lack of transparency creates a clash between AI developers' desire to protect trade secrets and a parent's constitutional right to due process.

The risks of relying on AI output that no one can verify are not hypothetical. In early 2024, a Massachusetts judge fined an attorney $2,000 for submitting court documents with fake case citations generated by AI. Similarly, in June 2023, Judge Castel of the Southern District of New York sanctioned two attorneys $5,000 under Rule 11 in Mata v. Avianca, Inc. for filing a legal brief containing six fictitious case citations created by an AI tool. These incidents highlight the essential principle that human lawyers and judges must maintain full control over and verify AI-generated outputs.

Bias and Equity in AI Custody Recommendations

AI systems, even the most advanced ones, aren't immune to the biases they inherit. When these algorithms are trained on historical court data, they can unintentionally reflect decades of systemic inequities. For example, minority and low-income families have historically faced disproportionate child welfare investigations, and AI tools risk perpetuating these patterns unless developers and courts take active steps to intervene.

The problem is far from theoretical. A study of 48 modern AI models revealed that half of them carried a high risk of bias. This was often due to missing sociodemographic details or datasets that were skewed. In family law cases, early findings highlight troubling disparities: AI systems recommended interventions for Black and Hispanic families at significantly higher rates than for White families with similar risk profiles. These issues can emerge even when race isn’t explicitly included in the data. Instead, proxies like zip codes, employment patterns, or healthcare access can unintentionally encode racial biases.

How Judges Assess Bias in AI Tools

Courts play a critical role in ensuring AI systems deliver fair outcomes. Judges often require independent audits to confirm that algorithms are not reinforcing inequities. For instance, these audits might check whether AI tools suggest different custody outcomes for families with similar parenting skills but differing incomes or neighborhoods.

Additionally, judges emphasize the importance of diverse training datasets. These datasets should reflect a wide range of family structures, economic situations, and social norms to avoid repeating historical injustices. Valerie Benton of the Northern Kentucky Law Review underscores this point:

"Algorithms should assist, but never replace, human judgment in matters regarding fundamental rights."

This human oversight acts as a safety net, allowing professionals to override AI recommendations that seem unfair or inconsistent.
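One way to picture the audit described in this section is a paired-case test: take a family profile, change only one attribute that should not matter (such as income band), and see whether the recommendation moves. The sketch below assumes a hypothetical `recommend` function that returns one parent's suggested share of parenting time; the toy models and field names are invented for illustration.

```python
def audit_paired_cases(recommend, cases, attribute, alternatives, tolerance=0.05):
    """Flag cases where changing only `attribute` shifts the recommendation.

    `recommend` is a hypothetical model: it takes a case dict and returns a
    parenting-time share between 0 and 1.
    """
    flagged = []
    for case in cases:
        baseline = recommend(case)
        for alternative in alternatives:
            if alternative == case[attribute]:
                continue
            variant = {**case, attribute: alternative}  # everything else unchanged
            shift = abs(recommend(variant) - baseline)
            if shift > tolerance:
                flagged.append((case, alternative, shift))
    return flagged


# Toy models for illustration only.
def biased_model(case):
    bonus = 0.1 if case["income_band"] == "high" else 0.0
    return 0.4 + 0.2 * case["cooperation"] + bonus


def neutral_model(case):
    return 0.4 + 0.2 * case["cooperation"]


cases = [
    {"cooperation": 0.5, "income_band": "low"},
    {"cooperation": 0.5, "income_band": "high"},
]
biased_flags = audit_paired_cases(biased_model, cases, "income_band", ["low", "high"])
neutral_flags = audit_paired_cases(neutral_model, cases, "income_band", ["low", "high"])
```

A test like this catches only direct dependence on the attribute being varied. Proxy effects, such as zip code standing in for race, need separate checks on outcomes across groups.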

Common Bias Risks and How to Address Them

Different types of bias call for tailored solutions. For example, representation bias occurs when training data lacks diversity, leading to poor outcomes for underrepresented groups. Aggregation bias happens when a single model is applied across diverse populations without considering their unique needs. Meanwhile, measurement bias stems from inconsistencies in how data is collected across institutions.

| Bias Risk | Description | Mitigation Strategy |
|---|---|---|
| Socioeconomic Penalization | Algorithms may favor parents with higher incomes or conventional work patterns | Incorporate data features that reflect non-traditional jobs and community support networks |
| Historical Racial Bias | Training on past court records can perpetuate systemic inequities | Apply adversarial training and fairness constraints to reduce bias in historical data |
| Gender Stereotyping | Models may default to traditional caregiving roles based on gender | Conduct regular audits to ensure equal treatment of genders in custody decisions |

Some states are stepping up with legal protections. For instance, California’s Artificial Intelligence Bill of Rights requires bias testing and transparency for government AI systems. These regulations mandate algorithmic audits, fairness techniques like debiasing, and detailed documentation of how recommendations are generated.

How AI Tools Like Coflo Support Custody Planning

The challenges of bias and lack of transparency in legal processes have driven the development of AI tools designed to assist with custody planning. These tools aim to support both parents and judges by offering custody recommendations that prioritize the child's well-being while adhering to established legal standards. While they don’t replace human decision-making, they help streamline the custody planning process, making it less contentious and more focused on the child’s needs. By providing clear, data-backed insights, these tools create a foundation for smoother custody arrangements.

Coflo Features That Align with Legal Standards

Coflo employs an interactive weighting system and in-depth data analysis to craft personalized custody recommendations. Parents can adjust sliders for key factors like stability, equal time, and school consistency. The AI then evaluates hundreds of variables specific to the family - such as work schedules, distances between homes, school routines, and extracurricular activities - to generate tailored custody schedules.

These recommendations are rooted in developmental psychology, offering age-appropriate guidance. For example, toddlers may benefit from shorter, more frequent visits to maintain strong bonds, while teenagers might require more flexible arrangements to accommodate their social lives. Coflo also provides detailed explanations for its suggestions, ensuring that parents and legal professionals can understand how each plan supports the child’s need for stability and meaningful time with both parents.

This level of transparency is especially valuable in legal settings. Family courts that have implemented AI-driven parenting plan tools report improved outcomes and greater parental satisfaction. By fostering clarity and alignment with legal standards, these tools also help reduce tensions between co-parents.
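The slider-based weighting described in this section can be sketched in a few lines. This is not Coflo's actual algorithm, which the article does not detail; the schedule names, per-factor scores, and slider values below are hypothetical and exist only to show how adjusting priorities can change which plan ranks first.

```python
def normalize_sliders(sliders):
    """Convert raw slider positions into weights that sum to 1."""
    total = sum(sliders.values())
    if total <= 0:
        raise ValueError("at least one slider must be above zero")
    return {name: value / total for name, value in sliders.items()}


def rank_schedules(candidates, sliders):
    """Return (schedule_name, score) pairs, best first."""
    weights = normalize_sliders(sliders)
    scored = [
        (name, sum(weights[factor] * scores[factor] for factor in weights))
        for name, scores in candidates.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical per-factor scores (0..1) for two candidate schedules.
candidates = {
    "alternating_weeks": {"stability": 0.6, "equal_time": 1.0, "school_consistency": 0.7},
    "primary_home_weekends": {"stability": 0.9, "equal_time": 0.4, "school_consistency": 0.9},
}

stability_first = rank_schedules(
    candidates, {"stability": 8, "equal_time": 2, "school_consistency": 5}
)
equal_time_first = rank_schedules(
    candidates, {"stability": 1, "equal_time": 10, "school_consistency": 1}
)
```

With stability weighted heavily, the single-primary-home plan ranks first; with equal time weighted heavily, alternating weeks does. Seeing that trade-off explicitly is what lets parents discuss priorities rather than positions.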

How Coflo Reduces Conflict Between Co-Parents

AI tools like Coflo shift custody planning from a confrontational process to one centered on collaboration and problem-solving. By offering neutral, research-based insights, Coflo helps dispel the notion that custody decisions result in one parent "winning" and the other "losing." Instead, it acts as an impartial mediator, balancing the needs and constraints of both parents, such as work schedules and personal priorities.

In a pilot program in Orange County, the use of AI-driven parenting plans led to a 78% reduction in conflict-related communications among high-conflict parents within just six months. This success stems from the platform’s ability to help parents visualize compromises and understand how their decisions impact the family’s overall dynamic. By making the process clearer and less emotionally charged, Coflo reduces the likelihood of drawn-out disputes.

Coflo is also broadening its capabilities to include AI-assisted tone coaching for challenging co-parenting discussions. Paired with step-by-step custody plan guides, this feature aims to address both the planning and execution phases of custody arrangements, ensuring smoother transitions and fewer obstacles.

Conclusion

AI tools are transforming custody planning by bringing clarity, fairness, and data-driven insights to a process often influenced by emotion. When these tools are designed to meet legal standards, they help judges and parents focus on the most important aspect: the child's well-being.

Judges expect AI systems to align with the "best interests of the child" standard, evaluating objective factors such as work schedules, school calendars, and developmental needs. Courts will likely reject algorithms that cannot explain their recommendations, because opacity undermines both accountability and judicial oversight.

Platforms like Coflo demonstrate how legal standards can be translated into practical solutions. By prioritizing the child's needs, Coflo offers recommendations supported by research, developmental insights, and an interactive system that allows families to weigh their priorities effectively.

Data from Los Angeles County and Orange County highlights the impact of AI-driven custody plans: a 40% reduction in post-decree modifications and a 78% decrease in conflict communications. These results show how AI can reshape custody planning, but its success depends on systems remaining transparent, accountable, and focused on the child.